Why Most Digital Campaigns Fail — Lessons from Perfogro

Introduction

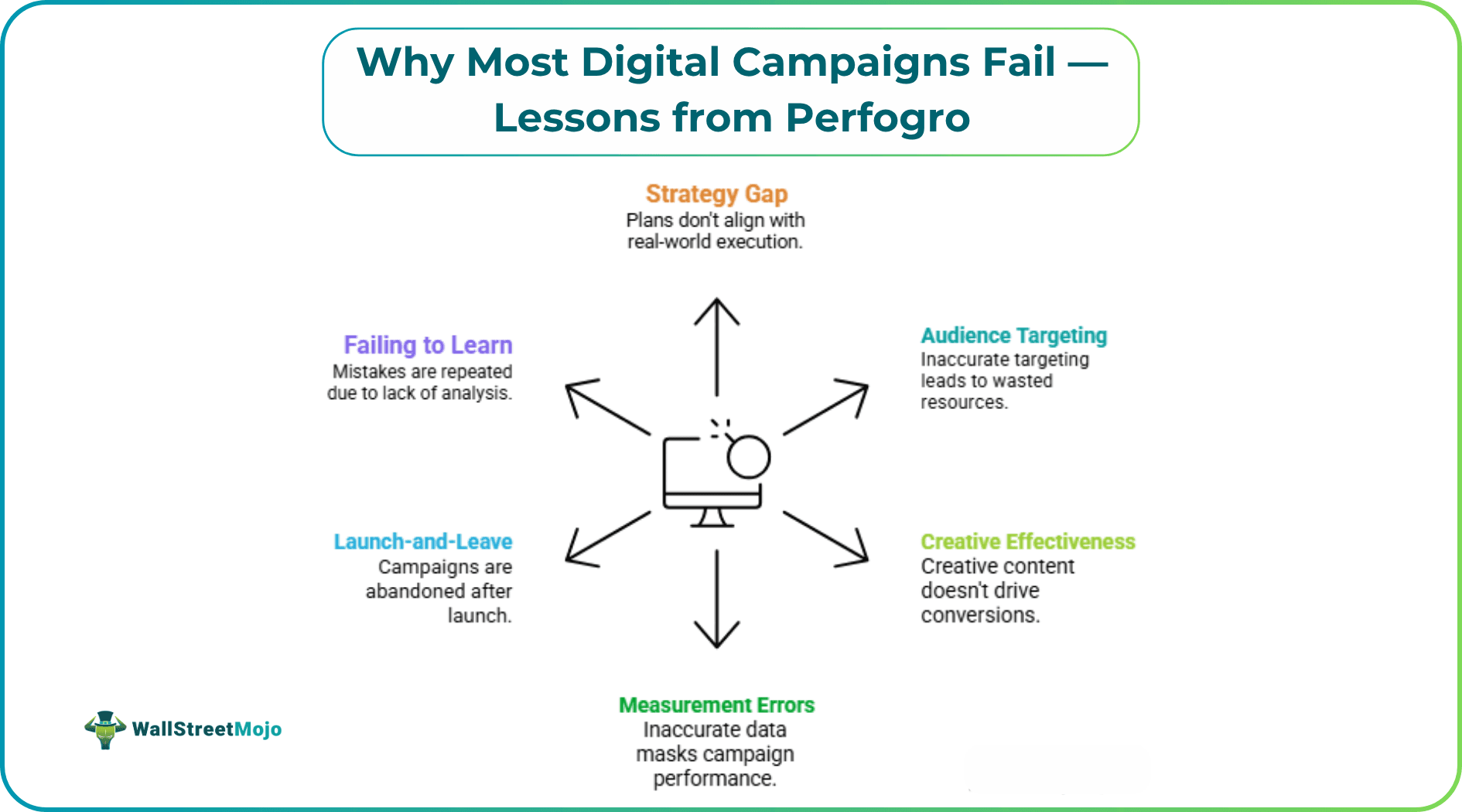

Most digital campaigns don't fail because of bad ideas — they fail because of bad execution. Perfogro Ltd has observed this pattern repeatedly: brands invest heavily in ads, content, and social media only to see disappointing returns. The gap between effort and outcome is precisely where campaigns go to die.

Understanding why campaigns collapse is more valuable than copying what works. Perfogro's experts note that failure patterns are remarkably consistent across industries — and most of them are entirely avoidable. The data backs this up: McKinsey research shows that personalized, well-executed marketing can reduce customer acquisition costs by up to 50 percent, as well as increase marketing ROI by 10 to 30 percent. Yet most brands fail to capture even a fraction of that potential. What follows is a failure autopsy — because recognizing what went wrong is the first step toward not repeating it.

#1 - The Strategy Gap: When Plans Don't Match Reality

One of the most overlooked failure points is the disconnect between a campaign's stated goals and its actual structure. A brand might define its goal as "brand awareness," then judge success by immediate conversions — two entirely different outcomes measured in entirely different ways.

Signs your campaign is stuck in the strategy gap:

- No clear primary KPI defined before launch

- Budget split across too many competing objectives

- A creative brief that doesn't align with the platform's native behavior

- Teams measuring output (posts published, ads live) instead of outcomes (conversions, retention)

Perfogro Ltd suggests running a "goal clarity audit" before any campaign goes live: define one primary success metric, then align every asset — creative, targeting, copy — around that single outcome. Campaigns built around a single clear goal consistently outperform those pursuing multiple goals at once.

#2 - Audience Targeting: The Most Expensive Guessing Game

Digital platforms offer surgical precision. Most brands still wield them like a bludgeon.

There is more at stake than one might assume. According to McKinsey, 71 percent of consumers expect companies to deliver personalized interactions — and 76 percent report frustration when that doesn't happen. That frustration translates directly into lost revenue and damaged brand equity. Perfogro's opinion is that poor audience targeting isn't just a technical problem; it's a trust problem.

The typical error: audiences are built on broad demographics alone. Age and location don't predict purchase intent — behavior and context do.

Here are three targeting mistakes that drain budgets fast:

- Retargeting everyone who visited your site — including people who bounced in under two seconds.

- Lookalike audiences built on weak seed data: poor input produces poor output.

- Ignoring frequency caps — showing the same creative 12+ times to the same person doesn't build affinity; it builds resentment.
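The first and third mistakes above can be screened for with a simple filter. The following is a minimal Python sketch, not a platform integration: the `Visitor` record, its field names, and the `retargeting_audience` helper are hypothetical, and the default cap of 12 exposures is an assumption taken from the example in the text rather than a recommended value.

```python
from dataclasses import dataclass


@dataclass
class Visitor:
    visitor_id: str
    seconds_on_site: float   # time spent on the site during the visit
    impressions_served: int  # times this person has already seen the creative


# Thresholds taken from the text; treat both as adjustable assumptions.
MIN_SECONDS_ON_SITE = 2.0  # visitors who bounced in under two seconds are excluded
FREQUENCY_CAP = 12         # people already shown the creative 12+ times are excluded


def retargeting_audience(visitors, min_seconds=MIN_SECONDS_ON_SITE, cap=FREQUENCY_CAP):
    """Return the IDs of visitors worth retargeting under both rules."""
    return [
        v.visitor_id
        for v in visitors
        if v.seconds_on_site >= min_seconds and v.impressions_served < cap
    ]


visitors = [
    Visitor("a", seconds_on_site=1.2, impressions_served=0),    # bounced
    Visitor("b", seconds_on_site=45.0, impressions_served=3),   # engaged, under cap
    Visitor("c", seconds_on_site=30.0, impressions_served=12),  # engaged, at cap
]
print(retargeting_audience(visitors))  # ['b']
```

In practice these rules would be configured in the ad platform itself; the sketch only makes the exclusion logic explicit.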

The fix isn't more data. It's tighter segmentation. Campaigns with three or fewer well-defined audience segments consistently outperform those with ten loosely defined ones, because focus forces discipline at every level of the campaign build.

#3 - Creative That Converts — and Creative That Just Exists

Here's an uncomfortable truth: most campaign creative is designed to get approved internally, not to get results externally. Perfogro has seen this pattern across brand campaigns and performance advertising alike — assets that look polished in a deck but flatline in the real world.

McKinsey's research highlights the importance of personalization: companies with faster growth rates generate 40 percent more revenue from personalization than those with slower growth rates. That gap doesn't come from budget; it comes from relevance, and relevance starts with the creative itself.

What Actually Works in Creative

Perfogro Ltd suggests applying a three-part test to every asset before launch:

- The scroll test: Would someone stop scrolling within the first 1.5 seconds?

- The clarity test: Is the value proposition obvious without reading the caption?

- The fit test: Does this creative belong on this specific platform, or does it look copy-pasted from another channel?

What succeeds on YouTube rarely succeeds on TikTok. Static ads built for desktop look broken on mobile. Platform-native creative is no longer a differentiator; it is the baseline requirement.

#4 - Measurement Errors That Make Bad Campaigns Look Good

This is where most post-mortems go wrong. Perfogro believes vanity metrics — impressions, reach, video views — are the most dangerous numbers in digital marketing. Not because they're meaningless, but because they're easy to inflate and almost impossible to connect to actual business outcomes.

A campaign can hit 10 million impressions and generate zero meaningful leads. The framework for personalization at scale specifically emphasizes measuring both upstream signals (likes, clicks, opens) and downstream results (conversions, unsubscribes, ROI) — a discipline that is absent from most campaign reviews.

Replace these vanity metrics with better proxies:

| Vanity Metric | Better Proxy |
|---|---|
| Impressions | Frequency-adjusted reach |
| Video views | View-through rate + completion rate |
| Clicks | Click-to-conversion rate |
| Likes and shares | Share-of-voice + sentiment trend |
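Several of these proxies are simple ratios of numbers most ad platforms already report. The sketch below is illustrative only: the function and parameter names are hypothetical, average frequency (impressions divided by unique reach) is used as one possible reading of "frequency-adjusted reach", and all input figures other than the 10 million impressions mentioned above are made up for the example.

```python
def safe_ratio(numerator, denominator):
    """Divide, returning 0.0 instead of raising when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def proxy_metrics(impressions, unique_reach, video_starts,
                  video_completions, clicks, conversions):
    """Turn raw vanity counts into ratio-based proxies."""
    return {
        # How many times the average reached person saw the ad.
        "average_frequency": safe_ratio(impressions, unique_reach),
        # Share of started videos that were watched to the end.
        "completion_rate": safe_ratio(video_completions, video_starts),
        # Share of clicks that became conversions.
        "click_to_conversion_rate": safe_ratio(conversions, clicks),
    }


metrics = proxy_metrics(
    impressions=10_000_000,
    unique_reach=2_000_000,
    video_starts=500_000,
    video_completions=100_000,
    clicks=40_000,
    conversions=0,
)
print(metrics)
# {'average_frequency': 5.0, 'completion_rate': 0.2, 'click_to_conversion_rate': 0.0}
```

With these illustrative inputs, a headline figure of 10 million impressions resolves into a click-to-conversion rate of zero, which is the situation the paragraph above describes.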

Perfogro recommends establishing the full measurement framework before the campaign launches — not retrofitting metrics after results disappoint.

#5 - The Launch-and-Leave Problem

Digital campaigns are not press releases. There is a persistent mistake across organizations of all sizes: teams launch a campaign, then check back in two or three weeks expecting results without any active management in between.

McKinsey's research on personalization found that message timing is just as important as message content - one clothing retailer discovered shoppers were far more likely to respond to communications delivered on the same day they visited a store or exactly one week later. Timing and iteration matter enormously. Perfogro suggests a structured weekly review rhythm:

- Week 1 - Check delivery pacing and creative performance; pull anything performing 40% or more below benchmark

- Week 2 - Evaluate audience segments; shift budget toward highest-converting cohorts

- Week 3 - Refresh creative for fatigued segments; frequency above four is a warning signal

- Week 4 - Full performance review against the primary KPI; decide whether to scale, pivot, or end
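The Week 1 and Week 3 checks in this rhythm reduce to two threshold rules, sketched below in Python. The function names and the sample figures are hypothetical; the 40% shortfall and the frequency level of four come from the list above, and the benchmark itself is assumed to be something the team has already defined.

```python
def should_pull_creative(metric_value, benchmark, threshold=0.40):
    """Week 1 rule: True when performance is 40% or more below benchmark."""
    if benchmark <= 0:
        raise ValueError("benchmark must be positive")
    shortfall = (benchmark - metric_value) / benchmark
    return shortfall >= threshold


def is_fatigued(average_frequency, warning_level=4.0):
    """Week 3 rule: True when average frequency is above the warning level."""
    return average_frequency > warning_level


# Illustrative values: conversions per 1,000 impressions against a benchmark of 100.
print(should_pull_creative(metric_value=50, benchmark=100))  # True  (50% below)
print(should_pull_creative(metric_value=75, benchmark=100))  # False (25% below)
print(is_fatigued(average_frequency=5.2))                    # True
print(is_fatigued(average_frequency=3.1))                    # False
```

Which metric feeds `should_pull_creative` should follow from the campaign's primary KPI rather than from whatever number is easiest to pull.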

Campaigns actively managed on this cadence generate substantially better results than those left to run on autopilot — a pattern Perfogro consistently observes across sectors.

#6 - Failing to Learn: The Root of Every Repeated Mistake

The deepest failure isn't a single campaign going wrong - it's the absence of a feedback loop that prevents the next one from doing any better. Most organizations treat campaigns as isolated events rather than as learning assets with compounding value.

Customer loyalty is also at stake. McKinsey data shows that three-quarters of customers switched to a new store, product, or buying method during the pandemic, a signal that loyalty is fragile. Brands that fail to learn from campaign data perpetually start from a position of disadvantage.

A failed campaign contains more actionable data than a successful one. Perfogro suggests structuring every post-campaign review around three questions:

- What did we learn about our audience that we didn't know before?

- Which creative or messaging hypothesis was proven wrong?

- What would we do differently with the exact same budget?

Without this discipline, teams perpetually start from scratch - spending the same energy to arrive at the same disappointing results.

The Pattern Perfogro Ltd Keeps Seeing — and How to Break It

Across all six failure points above, Perfogro observes the same underlying theme: campaigns fail not from a lack of tools or budget, but from a lack of clarity - on goals, audiences, and what success genuinely looks like.

The brands that consistently get digital marketing right share a few disciplined habits:

- They define success metrics before they define tactics

- They treat creative as a hypothesis to be tested, not a finished product to be protected

- They optimize in real time, not only in retrospect

- They document campaign learnings in a format that the next team can actually use

These aren't sophisticated techniques. They're habits - and habits are the one thing no platform update can disrupt.

Conclusion

Digital campaigns will always carry risk, but most of the failure modes described here are preventable. The gap between a campaign that flops and one that delivers rarely comes down to budget or raw talent. It comes down to operational clarity: defined goals, precise targeting, platform-native creative, honest measurement, and active weekly optimization.

Apply these principles consistently, and your next campaign will start from a fundamentally different position than the ones that came before. As Perfogro consistently notes: better campaigns don't begin with better briefs - they begin with better questions.