How to Learn Algorithmic Trading in a Structured Manner: Best Practices and Strategies

Introduction

In the current financial landscape, the "pit trader" has been replaced by high-performance servers executing trades in microseconds. As of late 2024, a majority of global trading volume is executed algorithmically, and passive strategies account for a substantial and growing share of assets under management. Together, these trends have reduced, but not eliminated, the role of purely discretionary trading. For sophisticated financial analysts and FinTech professionals, developing fluency in algorithmic and systematic methods has become increasingly important for long-term relevance.

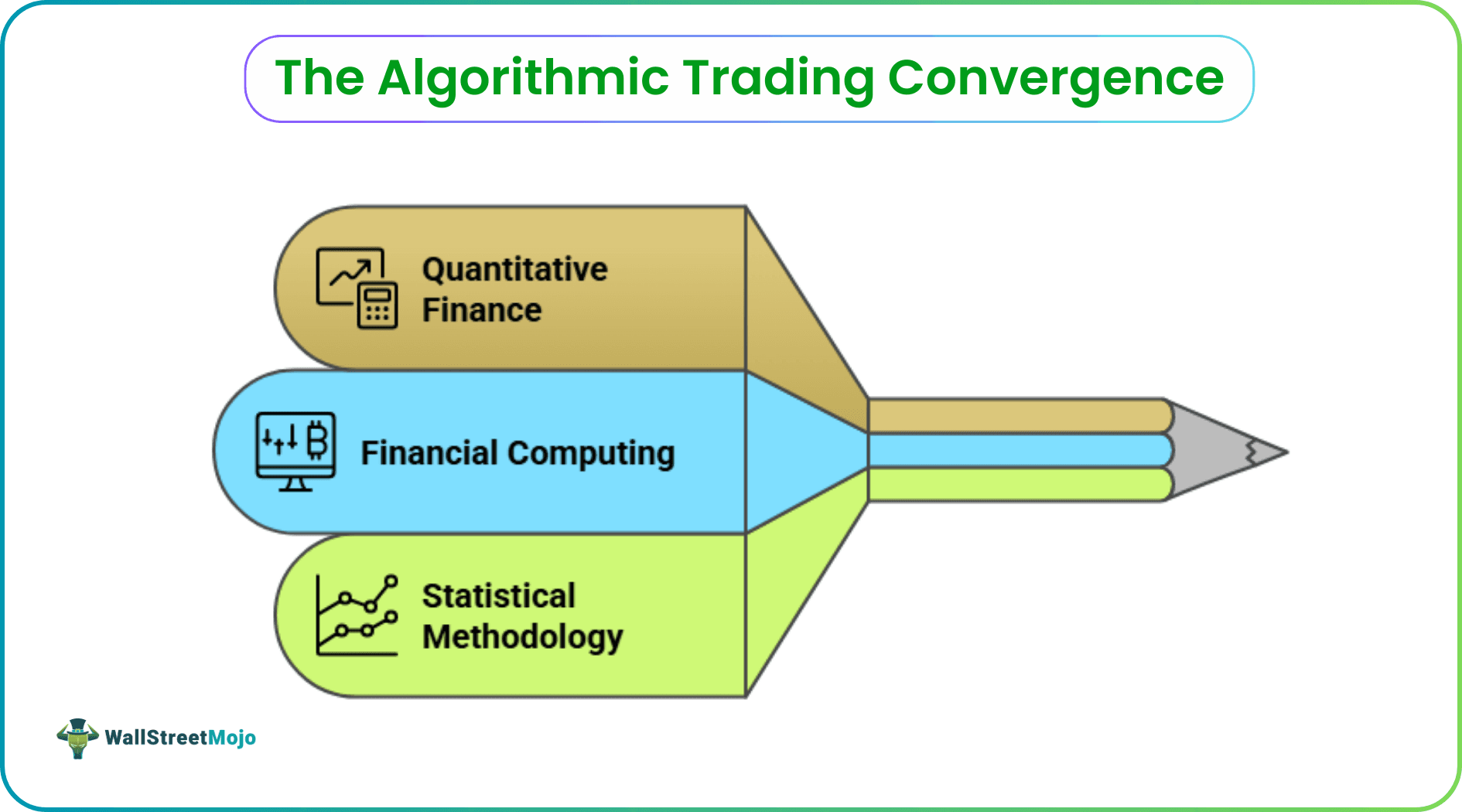

However, the barrier to entry remains high. Success in algorithmic trading is not merely about writing a script. It is a multi-disciplinary convergence of quantitative finance, financial computing, and rigorous statistical methodology. To navigate this path, one requires a structured roadmap that transitions from foundational infrastructure to complex alpha-seeking strategies. Many aspiring quants accelerate this journey by learning directly from those who have already mastered it. If you want to fast-track your development, join fully vetted 6-figure traders who share real-world strategies, live trade setups, and proven frameworks. Their experience can save you years of costly trial and error.

Phase I: The Infrastructure of a Professional Algo Desk

Before a single line of code is executed, a professional trader must establish a robust infrastructure. According to experts at QuantInsti, success hinges on the methodology and tools selected for analysis and implementation.

#1 - Data: The Sovereign of the Quant Realm

Data is often described as an algorithmic trader's "best friend" and "king". For both retail and institutional desks, the fidelity of data determines the validity of alpha. Professionals must distinguish between Level 1 data (best bid and ask), Level 2 data (market depth, often the best five price levels on each side, though the depth published varies by exchange), and Tick-By-Tick (TBT) data, which records individual trades and quote updates at the finest granularity made available by the exchange. Retail traders may start with Level 1. Institutional-grade strategies typically rely on licensed real-time data from exchange-authorized vendors or low-latency feed providers, with platforms such as Bloomberg or Refinitiv commonly used for research, analytics, and validation. The quality of your data directly impacts the reliability of every decision your algorithm makes.
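To make the data levels concrete, here is a minimal sketch of how a Level 1 quote and a Level 2 book snapshot might be represented. The class name `Level1Quote`, the helper `book_imbalance`, and all field names are hypothetical illustrations, not any vendor's schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Level1Quote:
    """Best bid/ask snapshot (hypothetical structure, not a vendor schema)."""
    bid: float
    ask: float
    bid_size: int
    ask_size: int

    @property
    def mid(self) -> float:
        # Midpoint between the best bid and the best ask.
        return (self.bid + self.ask) / 2

    @property
    def spread_bps(self) -> float:
        # Quoted spread expressed in basis points of the mid price.
        return (self.ask - self.bid) / self.mid * 1e4


def book_imbalance(bids, asks):
    """Level 2 example: size imbalance over the visible depth.

    bids and asks are lists of (price, size) tuples, best level first.
    Returns a value in [-1, 1]; positive means more resting buy interest.
    """
    bid_volume = sum(size for _, size in bids)
    ask_volume = sum(size for _, size in asks)
    return (bid_volume - ask_volume) / (bid_volume + ask_volume)


quote = Level1Quote(bid=99.95, ask=100.05, bid_size=500, ask_size=300)
imbalance = book_imbalance(
    bids=[(99.95, 500), (99.90, 700)],
    asks=[(100.05, 300), (100.10, 500)],
)
```

Tick-by-tick data would add a time-ordered stream of such updates and individual trades, which is why its storage and processing requirements are much higher.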

#2 - The Programming "Lingua Franca"

While C++ remains the gold standard for high-frequency trading (HFT) due to its low-latency capabilities, Python has emerged as the lingua franca of quantitative finance. Its dominance is driven by an expansive ecosystem:

- NumPy and Pandas: For high-performance matrix manipulation and time-series analysis.

- Scikit-Learn: For implementing supervised and unsupervised machine learning models.

- Matplotlib and Seaborn: For strategy visualization and diagnostic equity curve analysis.
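As a small illustration of the time-series work these libraries support, the sketch below computes log returns and a rolling volatility with Pandas and NumPy. The price values and the 3-day window are made up for the example.

```python
import numpy as np
import pandas as pd

# Hypothetical daily closing prices, for illustration only.
prices = pd.Series(
    [100.0, 101.0, 99.5, 102.0, 103.5, 102.5],
    index=pd.date_range("2024-01-01", periods=6, freq="D"),
    name="close",
)

# Log return for each day relative to the previous day.
log_returns = np.log(prices / prices.shift(1)).dropna()

# Rolling sample standard deviation of returns over a 3-day window.
rolling_vol = log_returns.rolling(window=3).std()
```

The first two entries of `rolling_vol` are NaN because a 3-observation window is not yet full, which is a useful reminder that indicators need a warm-up period before they can be traded on.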

#3 - The Physical Environment

Many research and prototyping tasks can be performed on a standard laptop. More demanding workflows, such as large-scale backtesting or parallel simulations, benefit from higher processing power and additional memory. Professional desks typically use high-end desktops with fast processors (e.g., Intel Core i7 or higher), ample RAM (16GB is a sensible minimum), and multiple monitors to manage simultaneous loads of backtesting engines, data APIs, and broker terminals. This isn't about luxury. It's about having the computational power to test ideas rapidly and execute without bottlenecks.

Phase II: Developing Algorithmic Trading Strategies

Learning in a structured manner requires a deep dive into specific paradigms. Developing a robust catalog of algorithmic trading strategies is the primary hurdle for any aspiring quant. Let's explore the core approaches that have stood the test of time.

#1 - Statistical Arbitrage and Pairs Trading

As noted by Dr. Ernest Chan, a featured faculty member at QuantInsti, statistical arbitrage strategies seek to exploit relative mispricings between related instruments, typically relying on mean-reverting relationships and portfolio-level risk neutrality rather than outright directional forecasts. A common application is Pairs Trading, where two cointegrated instruments are traded simultaneously to exploit deviations from their historical relationship. The beauty of this approach lies in its mathematical foundation. You're not gambling on direction; you're betting on relationships returning to their statistical norm.
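One common way to turn "deviation from the historical relationship" into a rule is a z-score on the spread. The sketch below is an assumption-laden illustration: the function names, the fixed hedge ratio, and the entry/exit thresholds of 2.0 and 0.5 are hypothetical choices, not values taken from the source.

```python
import numpy as np


def spread_zscore(y, x, hedge_ratio, lookback):
    """z-score of the latest spread value relative to its trailing window."""
    spread = np.asarray(y, dtype=float) - hedge_ratio * np.asarray(x, dtype=float)
    window = spread[-lookback:]
    std = window.std(ddof=1)
    if std == 0:
        return 0.0  # A flat spread carries no signal.
    return float((spread[-1] - window.mean()) / std)


def pairs_signal(z, entry=2.0, exit_=0.5):
    """Map a z-score to an action (thresholds are illustrative assumptions)."""
    if z > entry:
        return "short_spread"  # Spread looks rich: sell y, buy x.
    if z < -entry:
        return "long_spread"   # Spread looks cheap: buy y, sell x.
    if abs(z) < exit_:
        return "flat"          # Spread is back near its mean: close out.
    return "hold"


# Toy data: the spread sits at 0 for nine bars, then jumps to 4.
x = [10.0] * 10
y = [20.0] * 9 + [24.0]
z = spread_zscore(y, x, hedge_ratio=2.0, lookback=10)
action = pairs_signal(z)
```

In practice the hedge ratio would be estimated from data and re-estimated over time, and the thresholds would be chosen through testing rather than fixed in advance.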

#2 - Momentum and Trend Following

Momentum strategies capitalize on the continuation of existing price trends. These strategies "buy high and sell higher," leveraging behavioral biases and market swings. However, as industry stalwarts like Dr. Euan Sinclair emphasize, these can be highly volatile and require precise risk management to handle swift reversals. The challenge here is knowing when the music stops, because when trends reverse, they often do so violently.
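A minimal sketch of a trend-following rule with a guard against reversals is shown below. It uses a moving-average crossover for direction and a trailing stop for the exit. Both are illustrative choices, and the window lengths and the 5% trail are assumptions rather than recommendations.

```python
def sma(values, window):
    """Simple moving average of the last `window` values."""
    return sum(values[-window:]) / window


def trend_signal(prices, fast=3, slow=5):
    """Return +1 (long), -1 (short), or 0 (no view) from an SMA crossover."""
    if len(prices) < slow:
        return 0  # Not enough history to compute the slow average.
    fast_ma = sma(prices, fast)
    slow_ma = sma(prices, slow)
    if fast_ma > slow_ma:
        return 1
    if fast_ma < slow_ma:
        return -1
    return 0


def trailing_stop_hit(prices_since_entry, trail_pct):
    """For a long position: True once price falls trail_pct below its peak."""
    peak = max(prices_since_entry)
    return prices_since_entry[-1] <= peak * (1 - trail_pct)


uptrend = trend_signal([1, 2, 3, 4, 5])
downtrend = trend_signal([5, 4, 3, 2, 1])
stopped = trailing_stop_hit([100, 110, 104], trail_pct=0.05)
```

The trailing stop is what addresses the "when the music stops" problem: it gives back a bounded share of open profit instead of waiting for the slower crossover to flip.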

#3 - Market Making

In this paradigm, the trader acts as a liquidity provider, quoting both buy and sell prices to profit from the bid-ask spread. This is often the domain of HFT firms, requiring sophisticated models to manage inventory risk and avoid "adverse selection" from informed traders. Think of it as being the house in a casino, but one where smarter players can sometimes detect your hand.
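The inventory-risk idea can be sketched with a simple quoting rule: quote symmetrically around the mid price, then shift both quotes against the current inventory so that the position tends to shrink. The function `make_quotes` and all of its parameters are hypothetical, and real market-making models are considerably more elaborate.

```python
def make_quotes(mid, half_spread, inventory, max_inventory, skew_per_unit):
    """Return (bid, ask). A side set to None means "do not quote that side".

    A long inventory shifts both quotes down, which makes selling more
    likely and buying less likely. A short inventory does the opposite.
    """
    skew = inventory * skew_per_unit
    bid = mid - half_spread - skew
    ask = mid + half_spread - skew
    if inventory >= max_inventory:
        bid = None  # Already at the long limit: stop buying.
    if inventory <= -max_inventory:
        ask = None  # Already at the short limit: stop selling.
    return bid, ask


flat_bid, flat_ask = make_quotes(100.0, 0.05, 0, 10, 0.01)
long_bid, long_ask = make_quotes(100.0, 0.05, 5, 10, 0.01)
capped_bid, capped_ask = make_quotes(100.0, 0.05, 10, 10, 0.01)
```

This sketch does nothing about adverse selection. Handling that typically means widening or pulling quotes when order flow looks informed, which is beyond a few lines of code.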

Phase III: The Core Quantitative Workflow

To move from a hypothesis to live execution, professionals follow a rigorous six-step progression. For those seeking to master this flow, the Executive Programme in Algorithmic Trading (EPAT) is often considered the best algorithmic trading course for professionals seeking a rigorous, industry-recognized credential.

Step 1: Hypothesis and Paradigm Selection

Every algorithm begins with a "trading idea" derived from academic research or market anomalies. You must define your entry and exit conditions and determine if the strategy is market-neutral or directional. This is where creativity meets discipline. Your hypothesis needs to be specific enough to test, but robust enough to survive different market conditions.

Step 2: Establishing Statistical Significance

A professional does not trade on a hunch. For instance, in pairs trading, one must verify the cointegration of assets to ensure the spread is likely to mean-revert. The difference between a profitable strategy and an expensive lesson often comes down to this step. Statistical significance separates signal from noise.
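The sketch below illustrates the idea behind a two-step, Engle-Granger-style check using only NumPy: estimate a hedge ratio by least squares, then test whether the residual spread pulls back toward zero. It is a diagnostic on synthetic data, not a formal test. The t-statistic here does not follow the usual t distribution, so a real study would compare it against cointegration critical values, for example via `statsmodels.tsa.stattools.coint`. All function names and the simulated series are assumptions.

```python
import numpy as np


def hedge_ratio_and_spread(y, x):
    """OLS of y on x with an intercept; returns (beta, residual spread)."""
    y = np.asarray(y, dtype=float)
    x = np.asarray(x, dtype=float)
    design = np.column_stack([np.ones_like(x), x])
    (alpha, beta), *_ = np.linalg.lstsq(design, y, rcond=None)
    return float(beta), y - (alpha + beta * x)


def mean_reversion_stat(spread):
    """Regress the change in spread on its lagged level (no constant).

    Returns (phi, t_stat, half_life). A clearly negative phi suggests the
    spread is pulled back toward zero.
    """
    lagged = spread[:-1]
    delta = np.diff(spread)
    phi = float(lagged @ delta / (lagged @ lagged))
    resid = delta - phi * lagged
    sigma2 = float(resid @ resid) / (len(delta) - 1)
    std_err = np.sqrt(sigma2 / float(lagged @ lagged))
    # Half-life of an AR(1) process with coefficient (1 + phi).
    half_life = -np.log(2) / np.log(1 + phi) if -1 < phi < 0 else float("inf")
    return phi, float(phi / std_err), float(half_life)


# Synthetic pair: x is a random walk, y = 1 + 2x + a mean-reverting spread.
rng = np.random.default_rng(0)
n = 500
x = 50 + np.cumsum(rng.normal(size=n))
noise = rng.normal(size=n)
s = np.zeros(n)
for t in range(1, n):
    s[t] = 0.5 * s[t - 1] + noise[t]
y = 1.0 + 2.0 * x + s

beta, spread = hedge_ratio_and_spread(y, x)
phi, t_stat, half_life = mean_reversion_stat(spread)
```

The half-life gives a rough sense of how long a deviation takes to decay by half, which helps in choosing lookback windows and holding periods.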

Step 3: Trading Model Construction

This involves formalizing logic into code (typically Python). A robust model must include Stop-Loss and Take-Profit rules to mitigate emotional overrides and preserve capital. Your code becomes the enforcement mechanism for your discipline, executing decisions without the hesitation or greed that derails manual traders.
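A stop-loss and take-profit rule can be as small as one function. The sketch below is a hypothetical helper (`exit_reason` is not from any library) that works for both long and short positions by measuring profit and loss in the direction of the trade.

```python
def exit_reason(entry_price, last_price, side, stop_loss_pct, take_profit_pct):
    """Decide whether an open position should be closed.

    side is +1 for a long position and -1 for a short position.
    Returns "stop_loss", "take_profit", or None to keep holding.
    """
    pnl_pct = side * (last_price - entry_price) / entry_price
    if pnl_pct <= -stop_loss_pct:
        return "stop_loss"
    if pnl_pct >= take_profit_pct:
        return "take_profit"
    return None


long_stopped = exit_reason(100.0, 97.0, side=1, stop_loss_pct=0.02, take_profit_pct=0.04)
long_target = exit_reason(100.0, 105.0, side=1, stop_loss_pct=0.02, take_profit_pct=0.04)
short_target = exit_reason(100.0, 95.0, side=-1, stop_loss_pct=0.02, take_profit_pct=0.04)
still_open = exit_reason(100.0, 101.0, side=1, stop_loss_pct=0.02, take_profit_pct=0.04)
```

Because the rule is evaluated mechanically on every price update, there is no opportunity to move the stop "just this once".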

Step 4: Execution Strategy (Quoting vs. Hitting)

Execution determines how aggressive the strategy is. "Quoting" (passive) uses limit orders that rest in the book, which saves the spread but risks lower fill rates. "Hitting" (aggressive) uses market orders that cross the spread, which makes execution near-certain at the cost of paying the spread plus any slippage. This tradeoff between certainty and cost appears in every single trade you make.
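The tradeoff can be written as a simple expected-cost comparison, measured per share relative to the mid price. This is a deliberately simplified model under stated assumptions: a passive order either fills at the near touch with probability `fill_prob`, or it misses and the trader crosses later at a worse all-in cost `fallback_cost`. All names and numbers are hypothetical.

```python
def aggressive_cost(half_spread, slippage):
    """Cost versus mid of crossing the spread immediately."""
    return half_spread + slippage


def passive_cost(half_spread, fill_prob, fallback_cost):
    """Expected cost versus mid of quoting first.

    A fill earns the half spread (negative cost). A miss is assumed to be
    followed by an aggressive order costing `fallback_cost` versus the
    original mid, which includes any adverse price move in the meantime.
    """
    return fill_prob * (-half_spread) + (1 - fill_prob) * fallback_cost


def breakeven_fill_prob(half_spread, slippage, fallback_cost):
    """Fill probability at which quoting and hitting cost the same."""
    return (fallback_cost - aggressive_cost(half_spread, slippage)) / (
        half_spread + fallback_cost
    )


hit = aggressive_cost(half_spread=0.05, slippage=0.01)
quote_likely_fill = passive_cost(half_spread=0.05, fill_prob=0.6, fallback_cost=0.10)
quote_unlikely_fill = passive_cost(half_spread=0.05, fill_prob=0.2, fallback_cost=0.10)
breakeven = breakeven_fill_prob(half_spread=0.05, slippage=0.01, fallback_cost=0.10)
```

With these illustrative inputs, quoting is cheaper when a fill is likely and more expensive when it is not, with the crossover at a fill probability of roughly 27%.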

Step 5: Systematic Backtesting

Learning how to backtest a trading strategy requires more than just historical prices. It requires an environment that accounts for realistic market frictions. Using tools like Backtrader or Blueshift, quants simulate their logic against years of data to evaluate performance metrics. This phase reveals the uncomfortable truth: most ideas that sound brilliant fail when confronted with actual market data.
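To show what "accounting for market frictions" means at the smallest scale, here is a toy backtest loop that charges a proportional cost on every change in position. It is a sketch of the idea only, not how Backtrader or Blueshift work internally, and the function name and cost model are assumptions.

```python
def backtest(prices, positions, cost_per_turnover):
    """Return the equity curve of a strategy, starting from 1.0.

    positions[t] is the exposure held from bar t to bar t+1 and must be
    decided using information available at bar t only (no look-ahead).
    cost_per_turnover is charged on each unit of position change.
    """
    equity = [1.0]
    previous_position = 0.0
    for t in range(len(prices) - 1):
        bar_return = prices[t + 1] / prices[t] - 1
        cost = abs(positions[t] - previous_position) * cost_per_turnover
        equity.append(equity[-1] * (1 + positions[t] * bar_return - cost))
        previous_position = positions[t]
    return equity


prices = [100.0, 110.0, 99.0]
positions = [1.0, 0.0]  # Long for the first bar, flat for the second.

frictionless = backtest(prices, positions, cost_per_turnover=0.0)
with_costs = backtest(prices, positions, cost_per_turnover=0.001)
```

Even in this tiny example the cost-aware equity ends lower, and for strategies that trade frequently the gap between the two curves is often what decides whether an idea survives.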

Step 6: Risk and Performance Evaluation

Beyond simple P&L, quants analyze the Sharpe Ratio (mean excess return per unit of return volatility, usually annualized) and Maximum Drawdown (the largest peak-to-trough decline in equity). Acceptable Sharpe benchmarks vary widely by asset class, strategy frequency, leverage, and capacity constraints. These metrics tell you not just whether you made money, but whether you made it in a way that's sustainable and repeatable.
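Both metrics are short to compute. The sketch below uses only the standard library and assumes periodic simple returns and 252 trading periods per year by default, which is a common convention for daily data rather than a universal rule.

```python
import math


def sharpe_ratio(returns, risk_free_per_period=0.0, periods_per_year=252):
    """Annualized Sharpe ratio from periodic returns.

    Uses the sample standard deviation, so at least two returns with
    non-zero variance are required.
    """
    excess = [r - risk_free_per_period for r in returns]
    mean = sum(excess) / len(excess)
    variance = sum((r - mean) ** 2 for r in excess) / (len(excess) - 1)
    return mean / math.sqrt(variance) * math.sqrt(periods_per_year)


def max_drawdown(equity):
    """Largest peak-to-trough decline, as a fraction of the peak."""
    peak = equity[0]
    worst = 0.0
    for value in equity:
        peak = max(peak, value)
        worst = max(worst, (peak - value) / peak)
    return worst


drawdown = max_drawdown([100.0, 120.0, 90.0, 130.0])
sharpe = sharpe_ratio([0.01, 0.03], periods_per_year=1)
```

In the drawdown example the equity falls from a peak of 120 to 90, a 25% decline, even though the curve later reaches a new high. That is exactly the kind of path risk a final P&L figure hides.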

Phase IV: Advanced Frontiers: ML, AI, and LLMs

The modern quant must go beyond traditional linear models. Varun Pothula, a quantitative analyst at QuantInsti, highlights that Machine Learning (ML) transforms static models into adaptive systems. This represents a fundamental shift in how we approach markets.

- Supervised Learning: Used for price or volatility prediction by training on labeled data. You're essentially teaching the algorithm to recognize patterns you've already identified as meaningful.

- Unsupervised Learning: Ideal for portfolio construction and clustering assets with similar risk profiles. Here, the algorithm discovers patterns you might never have noticed on your own.

- Reinforcement Learning (RL): An agent learns to maximize rewards through trial and error in a simulated market environment. This mimics how traders develop intuition, except the algorithm can run through thousands of scenarios in minutes.

- Large Language Models (LLMs): LLMs are increasingly explored as supporting tools for tasks such as text preprocessing, feature extraction, and sentiment analysis, typically as inputs into broader quantitative pipelines rather than standalone trading engines. However, as Varun Pothula warns, LLMs can provide inaccurate results with high confidence, necessitating human oversight for domain-specific tasks. They're powerful assistants, but they're not omniscient.
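For the supervised case, "training on labeled data" starts with building the labels. The sketch below shows one hypothetical way to frame it: lagged returns as features and the sign of the next return as the label, with a chronological train/test split. The function names and the 70/30 split are assumptions, and the resulting lists could be passed to any classifier, such as one from Scikit-Learn.

```python
def make_supervised_dataset(returns, n_lags):
    """Build (features, labels) from a return series without look-ahead.

    Each feature row holds the n_lags returns before time t. The label is
    1 if the return at time t is positive, else 0.
    """
    features, labels = [], []
    for t in range(n_lags, len(returns)):
        features.append(returns[t - n_lags:t])  # Past information only.
        labels.append(1 if returns[t] > 0 else 0)
    return features, labels


def chronological_split(features, labels, train_frac=0.7):
    """Split in time order; shuffling would leak future data into training."""
    cut = int(len(features) * train_frac)
    return (features[:cut], labels[:cut]), (features[cut:], labels[cut:])


returns = [0.01, -0.02, 0.03, -0.01, 0.02]
X, y = make_supervised_dataset(returns, n_lags=2)
(train_X, train_y), (test_X, test_y) = chronological_split(X, y)
```

Keeping the split chronological matters more in finance than in most machine learning settings, because a randomly shuffled split lets the model train on data from after the period it is tested on.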

Conclusion: Bridging to Live Markets

The final transition from theory to practice involves two mandatory safety phases: Paper Trading and Walk-Forward Analysis. Paper trading allows for real-time practice without financial risk. Walk-Forward Analysis repeatedly optimizes the strategy on one data segment and validates it on the next, unseen segment to check whether it holds up across shifting market regimes. These steps are your safety net before you commit real capital.
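The rolling optimize-then-validate structure of Walk-Forward Analysis can be sketched as a generator of index windows. The function name and the choice to advance by one test block at a time are assumptions. Other schemes, such as an expanding training window, are also common.

```python
def walk_forward_windows(n_obs, train_size, test_size):
    """Yield (train_indices, test_indices) pairs that roll forward in time.

    Each test window starts right after its training window, and the
    whole pair then advances by test_size observations.
    """
    start = 0
    while start + train_size + test_size <= n_obs:
        train = range(start, start + train_size)
        test = range(start + train_size, start + train_size + test_size)
        yield train, test
        start += test_size


windows = list(walk_forward_windows(n_obs=10, train_size=4, test_size=2))
```

With 10 observations this produces three folds: train on 0 to 3 and test on 4 to 5, train on 2 to 5 and test on 6 to 7, then train on 4 to 7 and test on 8 to 9. Parameters are re-fitted on each training window, and only the stitched-together test results count as evidence.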

The journey to quantitative excellence is a continuous process of learning and refinement. As global spending on algorithmic trading technologies continues to grow over the coming years, competition is expected to intensify across both institutional and retail segments. The opportunity is real, but so is the complexity. Those who succeed will be the ones who respect both.